The whole industry needs rebuilt from the foundations. GRTT with a grading ring that tightly controls resources (including, but not limited to, RAM) as the fundamental calculus, instead of whatever JS happens to stick to the Chrome codebase and machine codes spewed by your favorite C compiler.

If one of us ever wins the lotto we better get on funding that

If someone wants to collab, I’ve been writing various codes around it: https://gitlab.com/bss03/grtt

Right now, it’s a bunch of crap. But, it’s published, and I occasionally try to improve it.

Also, Granule and Gerty are actual working implementations, tho I think some of the “magic” is in the right grading ring for the runtime, and they are more research oriented, allowing for fairly arbitrary grading rings.
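For anyone wondering what a "grading ring" even looks like, here is a minimal sketch. It assumes the simple zero/one/many grades that show up in the quantitative-types literature, not whatever ring a real runtime would want. `Grade`, `usage`, and the tuple-based term format are all made up for illustration and have nothing to do with how Granule or Gerty are implemented.

```python
from enum import Enum


class Grade(Enum):
    """Hypothetical zero/one/many grades: how often a resource is used."""
    ZERO = 0
    ONE = 1
    MANY = 2

    def __add__(self, other):
        # Using a resource in two places adds the grades, saturating at MANY.
        return Grade(min(self.value + other.value, 2))

    def __mul__(self, other):
        # Running a sub-term under a grade scales its usage by that grade.
        return Grade(min(self.value * other.value, 2))


def usage(term, name):
    """Compute how often variable `name` is used in a toy term.

    Terms are tuples (an assumption of this sketch):
      ("var", x)      a variable occurrence
      ("pair", a, b)  both sub-terms are evaluated once
      ("repeat", a)   the sub-term may be evaluated any number of times
    """
    kind = term[0]
    if kind == "var":
        return Grade.ONE if term[1] == name else Grade.ZERO
    if kind == "pair":
        return usage(term[1], name) + usage(term[2], name)
    if kind == "repeat":
        return Grade.MANY * usage(term[1], name)
    raise ValueError(f"unknown term kind: {kind!r}")


if __name__ == "__main__":
    term = ("pair", ("var", "buf"), ("repeat", ("var", "n")))
    print(usage(term, "buf"), usage(term, "n"), usage(term, "other"))
```

A type checker built on this idea would reject a program whose computed grade for a variable exceeds the grade it was declared with. Swap in a richer ring (bytes of RAM, say) and you get the kind of resource control described above.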

It took me a long time to figure out that “GRTT” is “Graded Modal Type Theory”. Letting others know, if they want to look into it further.

Sorry. I didn’t pick the acronym, it comes from the paper: https://arxiv.org/pdf/2010.13163.pdf I’m not sure why there’s no “M” in the acronym, but I should probably spell things out when I actually want collaborators.

While I’m dropping links, I will also drop https://github.com/granule-project/ where Gerty and Granule live and where real research is done.

You do really feel this when you’re using old hardware.

I have an iPad that’s maybe a decade old at this point. I’m using it for the exact same things I was a decade ago, except that I can barely use the web browser. I don’t know if it’s the browser or the pages or both, but most web sites are unbearably slow, and some simply don’t work: JavaScript hangs and some elements never load. The device is too old to get OS updates, which means I can’t update some of the apps. But, that’s a good thing because those old apps are still very responsive. The apps I can update are getting slower and slower all the time.

It’s the pages. It’s all the JavaScript. And especially the HTML5 stuff. The amount of code that is executed in a webpage these days is staggering. And JS isn’t exactly a computationally modest language.

Of the 200kB loaded on a typical Wikipedia page, about 85kB of it is JS and CSS.

Another 45kB for a single SVG, which in complex cases is a computationally nontrivial image format.

I don’t agree. It’s both. I’ve opened basic no JS sites on old tablets to test them out and even those pages BARELY load

What caused the latency in that case?

Probably just the browser itself, considering how bloated they’re getting. It’s not super surprising: the apps run about as fast (on a good day) on a new phone as they did 5-10 years ago, so of course they’re gonna run like dogshit on a phone from that era.

I can’t update YouTube on my iPad 2 that I got running again for the first time in years. It said it had been 70,000~ hours since last full charge. I wanted to use it to watch videos on when I’m going to bed. But I can’t actually login to YouTube because the app is so old and I seemingly can’t update it.

I was using the web browser and yeah I don’t remember it being so damn slow. It’s crazy how that is.

Computer speed feels about the same as it was years ago.

Uh, that’s a bit off to be fair.

Our computers are 15x faster than they were about 15-20 years ago, sure…

But 1, the speed is noticeable and 2, not all the new performance is utilised 100%.

Sure, operating systems have started utilising the extra hardware to deliver the same 60-120fps base experience with some extra fancy (transparency with blur, for example, instead of regular transparency), but overall computer UX has plateaued a good decade and a half ago.

The bottleneck is not the hardware or bad software but simply the idea of “why go faster when going this speed is fine, and we now don’t need to use 15-30% of the hardware to do it but just 1-2%”.

Oh and the other bottleneck is stupidity, like letting long running tasks onto the main/UI thread, fucking up the experience. Unfortunately, you can’t help stupid.
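For what it’s worth, keeping slow work off the UI thread isn’t hard. A rough sketch using only the Python standard library: `slow_task`, `run_in_background`, and `ui_loop` are made-up names, and the "UI" here is just a loop counting frames, standing in for a real toolkit’s event loop.

```python
import queue
import threading
import time


def slow_task(n):
    """Stand-in for a long-running job (disk, network, heavy compute)."""
    time.sleep(0.2)
    return n * n


def run_in_background(func, arg):
    """Start func(arg) on a worker thread; the result arrives on a queue."""
    results = queue.Queue()

    def worker():
        results.put(func(arg))

    threading.Thread(target=worker, daemon=True).start()
    return results


def ui_loop(results, tick=0.01):
    """Keep 'drawing frames' until the background result is ready."""
    frames = 0
    while True:
        try:
            return results.get_nowait(), frames
        except queue.Empty:
            frames += 1       # a real UI would redraw and handle input here
            time.sleep(tick)  # pace the loop instead of spinning


if __name__ == "__main__":
    value, frames = ui_loop(run_in_background(slow_task, 7))
    print(f"result {value}, drew {frames} frames while waiting")
```

Calling `slow_task(7)` directly inside the loop would freeze the "UI" for the whole 0.2 seconds, which is exactly the experience being complained about.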

No, the bottleneck is fucking electron apps.

why do anime girls have to be right all the time?

They are all actually the start of an incredibly complex dojinshi.

I sure hope the tags are wholesom… OH NO.

To prevent fictionalist comments in replies.

On Linux it really is noticeable

Well, until you open a browser… or five, because these days nobody wants to build native applications anymore and instead they shove webapps into electron containers.

Right now, my laptop doesn’t have to run much. Just a combination of KDE, browser, emails, music player, a couple of messengers and some background services. In total, that uses about 9.5 GB of RAM. 20 years ago we would have run the same workload with less than 1 GB.

Yeah, Discord is eating 1.5 GB of RAM. Actually crazy

I recently had occasion to run a headless Linux distro and got my socks knocked off when I ran top and saw idle RAM use at fucking 400 MB
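If you want to check that number without top, here’s a small sketch that reads `/proc/meminfo` (Linux only) and reports total minus available memory. `parse_meminfo` and `used_mib` are names invented for this example, and the `SAMPLE` text is illustrative filler so the script still runs elsewhere, not a real measurement.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into {field name: integer value}."""
    fields = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts:
            fields[name.strip()] = int(parts[0])  # memory fields are in kB
    return fields


def used_mib(fields):
    """Memory in use, in MiB, counted as MemTotal minus MemAvailable."""
    return (fields["MemTotal"] - fields["MemAvailable"]) / 1024


# Illustrative input only, shaped like the real file.
SAMPLE = """MemTotal:        2048000 kB
MemFree:          900000 kB
MemAvailable:    1638400 kB
"""

if __name__ == "__main__":
    try:
        with open("/proc/meminfo") as handle:
            text = handle.read()
    except OSError:
        text = SAMPLE  # not on Linux: fall back to the illustrative sample
    print(f"{used_mib(parse_meminfo(text)):.0f} MiB in use")
```

Using `MemAvailable` rather than `MemFree` matters here: free memory excludes page cache the kernel can reclaim, so it makes an idle box look busier than it is.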

whomst is jeavon

https://en.wikipedia.org/wiki/Jevons_paradox

Whenever efficiency increases consumption increases. Better steam engines mean more coal consumption. Faster cheaper RAM means more RAM consumption.

This entire thread is a perfect example of the paradox folks keep mentioning:

Nobody in both 🧵s pointed out that Ocean used Mastodon to post the banter with.

Plenty more optimized federated slop software in the market. I am also on Jabber, if it means anything to Zoomies.

When you become one with the penguin, though … then you can begin to feel how much faster modern hardware is.

Hell, I’ve got a 2016 budget-model chromebook that still feels quick and snappy that way.

But… 2016 was a decade ago. If it feels quick and snappy that way that means the post is right.

Which it kinda isn’t but hey.

the point is the software is what’s wrong, not the hardware. it feels snappy because it’s linux, not because it’s old hardware.

Except the Linux userbase has been saying that exact thing for the past ten years, so again, has Linux also degraded in sync or, hear me out here, is this mostly a nostalgia thing that makes you forget the kludgy performance issues of the software you used when you were younger and things have mostly gotten snappier over time across the board?

As a current dual booter I’ll say that Windows and Linux don’t feel fundamentally different these days, for good and ill. Windows has a remarkably crappy and entirely self-inflicted issue with their online-search-in-Start-menu feature, which sucks but is toggleable at least. Otherwise I have KDE and Win11 set up the same way and they both work pretty much the same. And both measurably better than their respective iterations 10, let alone 15 or 20 years ago.

Windows and Linux don’t feel fundamentally different these days

Try Windows 11 vs. Linux on a shitty old laptop with a budget 2-core processor and 2GB of RAM. Then tell me Windows and Linux don’t feel any different.

My bf bought me a brand new laptop with Win 10 preinstalled, and even after disabling or uninstalling as much as I could, it was literally like watching a slideshow. Then I installed Linux, and it…worked like you’d expect a brand new computer to work, fast and smooth. Never used Win 11 because I stopped using Windows after that.

I’ve got a 2007 laptop that was shitty even for its time, and it does the job perfectly as a home server with Debian and a few good open source services I want to host

Sadly, that is not how it is for me. I’ve never (in the last 20 years) experienced freezes as bad and as frequent as with my new beefy Linux PC.

For anyone unsure: the Jevons paradox is that when there’s more of a resource to consume, humans will consume more of the resource rather than make the gains to use the resource better.

Case in point: AI models could be written to be more efficient in token use (see DeepSeek), but instead AI companies just buy up all the GPUs and shove more compute in.

For the expansive bloat - same goes for phones. Our phones are orders of magnitude better than what they were 10 years ago, and now they’re loaded with bloat because the manufacturer thinks “Well, there’s more compute and memory. Let’s shove more bloat in there!”

The Jevons paradox is that when there’s more of a resource to consume, humans will consume more of the resource rather than make the gains to use the resource better.

More specifically, it’s when an improvement in efficiency causes the underlying resource to be used more, because the efficiency reduces cost and then using that resource becomes even more economically attractive.

So when factories got more efficient at using coal in the 19th century, England saw a huge increase in coal demand, despite using less coal for any given task.

Also Eli Whitney inventing the cotton gin to make extracting cotton less of a tedious and backbreaking process, which led to a massive expansion of slave plantations in the American South due to the increased output and profitability of the crop.

Case in point: AI models could be written to be more efficient in token use

They are being written to be more efficient in inference, but the gains are being offset by trying to wring more capabilities out of the models by ballooning token use.

Which is indeed a form of the Jevons paradox

Costs have been dropping by a factor of 3 per year, but token use increased 40x over the same period. So while the efficiency is contributing a bit to the use, the use is exploding even faster.
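Quick back-of-the-envelope on those two numbers, assuming both refer to the same one-year window and that "cost" means price per token (neither is spelled out above). `net_spend_multiplier` is just a name for this sketch.

```python
def net_spend_multiplier(cost_drop_factor, usage_growth_factor):
    """Change in total spend when unit cost falls and usage grows.

    total spend = unit cost * usage, so the multiplier is
    usage_growth_factor * (1 / cost_drop_factor).
    """
    return usage_growth_factor / cost_drop_factor


if __name__ == "__main__":
    multiplier = net_spend_multiplier(cost_drop_factor=3, usage_growth_factor=40)
    print(f"total spend changes by about {multiplier:.1f}x")  # about 13.3x
```

So under those assumptions each token got 3x cheaper while the total bill still grew roughly 13x, which is why the efficiency gains don’t show up as savings.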

I think we’re meaning the same thing.

Yes, but have you considered if I just rephrase what you just said but from a slightly different perspective?

I always felt American car companies were a really good example of that back in the 60s-70s when enormously long vehicles with giant engines were the order of the day. Why not bigger? Why not stronger? It also acted as a symbol of American strength, which was being measured by raw power just like today lol.

This also reminds me of the way video game programmers in the late 70s/early 80s had such tight limitations to work within that you had to get creative if you wanted to make something stand out. Some very interesting stories from that era.

I also love to think about the tricks the programmer of Prince of Persia had employed to get the “shadow prince” to work…

I feel like this is Windows specific. Linux is rapid on PCs and my MacBook is absurdly quick.

Mint Xfce on my 2015 laptop compared to its previous system was the difference between usable and waiting 10 minutes for it to even boot, and things like gaming, VMs, comically large spreadsheets (surprisingly the memory hog), etc., were an eternal challenge on it. On my current laptop, I have the luxury of picking the systems by aesthetics and non-optimization functions instead. And to compare, I’ve run even the same updates on the two laptops, as the older one still works.

My 12? 13? Year old Dell laptop does just fine running Ubuntu. It’ll probably be fine for my needs for another 3 or 4 years at least.

App launch time can be annoyingly slow on mac if you’re not offline or blocking the server it phones home to

it can be the difference between one bounce or seven bounces of the icon on my end

What apps out of interest? I’m a new Mac owner, so limited experience, but everything seems insanely quick so far. Even something like Xcode is a one-bounce on this M4 Air.

All of them. The device has to phone home to apple to ask permission to run them.

to test: close the app (really shut, make sure the dot on the icon isn’t glowing), then open it and measure the time

close app and then disconnect from the internet and launch again

the speed difference depends on how overloaded Apple’s servers are.

I’ve not come across this but I’ll check it out. Is that App Store apps only?

I think probably 90% of the apps I’ve installed have been through the homebrew package manager which likely means they don’t do any phoning home, but I’ll check out the pre-installed stuff and see if I can replicate.

PC games are software.

Unfortunately many PC games are also like this, astoundingly poorly optimized, just assume everyone has a $750 GPU.

Proton can only do so much.

… and Metal basically can’t do that much.

Look at Metal Gear Solid 5 or TitanFall 2, and tell me realtime video game graphics have dramatically increased in visual fidelity in the last decade.

They haven’t really.

They shifted to a poorly optimized, more expensive paradigm for literally everyone involved; publisher, developer, player.

Everything relating to realtime raytracing and temporal antialiasing is essentially a scam, in the vast majority of actual implementations of it.

I guess the counter argument for games is load times have dramatically improved, though that’s less about software development than hardware improvements.

If we put consoles in the same bracket as computers, the literally instant quick-resume feature on an Xbox (for example) feels like sci-fi.

Yeah, you kinda defeated your own argument there, but you do seem to recognize that.

You can instant resume on a Steam Deck, basically.

You can alt tab on a PC, at least with a stable game that is well made and not memory leaking.

Yeah, better RAM / SSDs does mean lower loading times, higher streaming speeds/bus bandwidths, but literally, at what cost?

You could just actually take the time to optimize things, find non insanely computationally expensive ways to do things that are more clever, instead of just saying throw more/faster ram at it.

RAM and SSD costs per gig are going up now.

Moore’s Law is not only dead, it has inverted.

Constantly cheaper memory going forward turned out not to be the best assumption to make.

Anyone opening the app menu (from the dock or Home Screen) on an iPad will tell you that it’s not exclusive to windows pcs.

phones.

Using a smartphone from 5 years ago with modern updates feels worse than the first iPhone.

The problem is not so much badly written programs and websites in terms of algorithms, but rather latency. The latency of loading things from storage, sometimes through the internet is the real bottleneck and why things feel so slow.

even with modern SSDs, things sometimes feel slower than they did on HDDs with period-accurate software. windows 7 was snappy even on an HDD, windows 11 is slow and sluggish everywhere

That’s some nonsense, though.

For one thing, it’s one of those tropes that people have been saying for 30 years, so it kinda stops making sense after a while. For another, the reason it doesn’t make sense is it doesn’t account for modern computers doing more now than they did then.

In 2016 I had a 970 that’s still in an old computer I use as a retro rocket, and I can promise you that wonderful as that thing was, I couldn’t have been playing Resident Evil this week on that thing. So yeah, I notice.

And I had a Galaxy S7 then, which is still in use as a bit of a display and I assure you my current phone is VERY noticeably faster, even discounting the fact that it’s displaying 120fps rather than 60.

Old people have been going “things were better when I was a kid” for millennia. I’m not assuming we’re gonna stop now, but… maybe we should.

It tracks that the guy I saw raging about linux more than once is defending bloat lol

it doesn’t account for modern computers doing more now than they did then.

We know they do more, but most of that “more” is bullshit bloat and sloppy engineering, neither of which we asked for.

When I boot up Windows 11 and start no programs, somehow there’s already over 8 GB of RAM consumed, the CPU shows 8% utilisation, there are 244 processes running, and it still took every bit as long to get there as my Windows 7 machine from 2011. That’s what the rest of us are talking about.

When was the last time you booted a 2011 machine? Because man, is that not true.

And that’s a 2016-2017 era PC.

Windows 7 didn’t even have fast boot support at all. I actively remember recommending people to let their PCs sit for a couple of minutes after booting so that Windows could finish whatever the hell it was trying to do in the background faster instead of clogging up whatever else you were trying to do.

Keeping my old hardware around compulsively really impacts my perception of this whole “things were better when I was a teenager” stuff.

I very specifically said “my windows machine from 2011” because it’s still in use.

Then you’re either lying about it or haven’t booted a newer PC. Fast Boot was a back of the box feature for Windows 8 for a reason. It was becoming a huge meme at the time how slow Win7 was to boot.

If your 2020s PC with Windows 11 is taking 45 seconds to boot on the Windows logo like Win 7 does (as seen in the benchmarks above) then you need some tech support because something is clearly not working as expected. I don’t think even my weaker Win11 machines take longer than 10 secs from boot starting to the password screen.

That may be true anyway, because the tiny hybrid laptop I’m using to write this is reporting 2-5% CPU utilization even with a literal hundred tabs open in this browser. So… yeah, either you have a knack for hyperbole or something broken.

Neither of them are fresh out the box clean installs, they are what they are.

But anyway, I’ve been trying to engage in good faith but you keep being obnoxious on every single post you make, so it’s Block time

Hah. You do you. I get how it’d be obnoxious to be called out, but man, it’s not my fault that you chose the worst possible example for this. Like, literally the worst iteration of Windows for the specific metric you called out, in a clearly demonstrable way that a ton of people measured because it was such a meme.

You can block me, but “they are what they are” indeed.

Incidentally, this is a classic opportunity to remind people that blocking on AP applications sucks ass and the only effect it has is for the blocker to stop being able to see what the blockee is saying about them while everybody else still gets access to both. Speaking of software degradation, somebody should look into that.

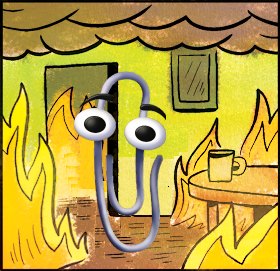

The program expands so as to fill the resources available for its execution

– C.N. Parkinson (if he were alive today)